IDIAL Kubernetes Setup Guide

| Version | Date | Author | Change |
|---|---|---|---|
| 1.0 | 25.03.2026 | Maximilian Wilke (BxC Security) | First Version |

This document provides the formal installation and deployment procedure for the IDIAL platform on a Kubernetes cluster. It covers the initial cluster setup, installation of the required infrastructure components, deployment of the IDIAL application, service access, failover expectations, and troubleshooting guidance.

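The guide is intended for administrators responsible for preparing and operating the target Kubernetes environment. Execute all command blocks and configuration examples exactly as shown, replacing placeholders in angle brackets (for example `<MASTER-IP-ADDRESS>`) with the values of your environment.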

1. General

1.1 Introduction

IDIAL runs on a bare-metal Kubernetes cluster consisting of one control-plane node and two worker nodes. The infrastructure stack consists of:

- containerd as the container runtime
- Flannel as the CNI plugin (pod CIDR 10.244.0.0/16)
- Longhorn for distributed, replicated persistent storage
- MetalLB for LoadBalancer IP assignment on bare metal
- nginx-ingress as the single TLS entry point

2. Base Preparation

Begin by updating the package index and upgrading all installed packages on every node. Then install the container runtime.

```bash
sudo apt update -y && sudo apt upgrade -y
sudo apt install -y containerd
```

Configure containerd by creating the required configuration directory and generating the default configuration file.

```bash
sudo mkdir -p /etc/containerd
containerd config default | sudo tee /etc/containerd/config.toml
```

In /etc/containerd/config.toml, change SystemdCgroup from false to true in order to align the container runtime with Kubernetes systemd-based cgroup management.

```bash
sudo vim /etc/containerd/config.toml
# Find:   SystemdCgroup = false
# Change: SystemdCgroup = true
```
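If you prefer a non-interactive edit (for example when preparing several nodes), the same change can be made with `sed`. The following is a minimal sketch; the function name `enable_systemd_cgroup` is hypothetical and not part of any IDIAL tooling, and it assumes the default configuration generated above, which contains the line `SystemdCgroup = false`.

```bash
# Hypothetical helper: switch containerd to the systemd cgroup driver.
# Replaces "SystemdCgroup = false" with "SystemdCgroup = true" in place
# (indentation is preserved) and returns non-zero if no
# "SystemdCgroup = true" line exists afterwards.
enable_systemd_cgroup() {
  local config="${1:-/etc/containerd/config.toml}"
  sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' "$config" || return 1
  grep -q 'SystemdCgroup = true' "$config"
}
```

Run it as root (for example in a `sudo -i` shell). containerd picks up the change after `systemctl restart containerd` or after the reboot at the end of this section.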

Load the kernel modules required by Kubernetes networking.

```bash
sudo vim /etc/modules-load.d/k8s.conf
```

Add the following content:

```
overlay
br_netfilter
```
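The same file can be written without an editor. The sketch below uses a hypothetical helper, `write_k8s_modules`, which overwrites the file instead of appending to it, so running it twice is harmless.

```bash
# Hypothetical helper: write the kernel modules that systemd-modules-load
# loads at boot. Overwrites the target file, so repeated runs are safe.
write_k8s_modules() {
  local target="${1:-/etc/modules-load.d/k8s.conf}"
  printf '%s\n' overlay br_netfilter > "$target"
}
```

As root, run `write_k8s_modules`; `modprobe overlay` and `modprobe br_netfilter` load the modules immediately instead of waiting for the reboot.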

Configure the required network-related kernel parameters.

```bash
sudo vim /etc/sysctl.conf
```

Add or uncomment the following lines:

```
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
```
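Instead of editing /etc/sysctl.conf, the settings can also be placed in a drop-in file under /etc/sysctl.d/, which is the approach used in the upstream Kubernetes documentation. The sketch below assumes the file name k8s.conf (an arbitrary choice) and a hypothetical helper name.

```bash
# Hypothetical helper: write the Kubernetes networking sysctls to a drop-in
# file. Overwrites the file, so repeated runs leave exactly three settings.
write_k8s_sysctl() {
  local target="${1:-/etc/sysctl.d/k8s.conf}"
  cat > "$target" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF
}
```

As root, `write_k8s_sysctl && sysctl --system` writes the file and reloads all sysctl configuration files without a reboot.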

Reboot the node to ensure all configuration changes and kernel settings are applied.
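After the reboot, you can confirm that the settings are active. The following sketch (hypothetical helper `check_k8s_sysctls`) reads the values from /proc/sys. The `net.bridge.*` entries only exist once `br_netfilter` is loaded, so a missing entry points to the module step rather than the sysctl step. The `PROC_SYS` variable is only there to make the function testable.

```bash
# Hypothetical helper: verify that each required kernel parameter is 1.
# Keys are mapped to /proc/sys paths by replacing "." with "/".
check_k8s_sysctls() {
  local root="${PROC_SYS:-/proc/sys}" key value rc=0
  for key in net.bridge.bridge-nf-call-iptables \
             net.bridge.bridge-nf-call-ip6tables \
             net.ipv4.ip_forward; do
    value="$(cat "$root/$(printf '%s' "$key" | tr . /)" 2>/dev/null || echo missing)"
    if [ "$value" != "1" ]; then
      echo "NOT OK: $key = $value" >&2
      rc=1
    fi
  done
  return "$rc"
}
```

Run it on each node; it prints nothing and returns 0 when all three values are 1.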

2.1 Installation of Kubernetes Packages

Install the prerequisite packages required to access the Kubernetes package repository. Then add the Kubernetes signing key and repository, update the package index, and install the Kubernetes node components. This must be done on all nodes.

```bash
sudo apt-get install -y apt-transport-https ca-certificates curl gpg

# /etc/apt/keyrings does not exist on older releases; create it if needed
sudo mkdir -p -m 755 /etc/apt/keyrings

curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.35/deb/Release.key \
  | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

# The repository entry must stay on one line (no backslash inside the quotes)
echo 'deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.35/deb/ /' \
  | sudo tee /etc/apt/sources.list.d/kubernetes.list

sudo apt-get update
sudo apt-get install -y kubelet kubeadm kubectl
sudo apt-mark hold kubelet kubeadm kubectl
```
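The key URL and the repository line must refer to the same minor version channel (here v1.35). A small sketch that derives both from one value; the helper names are hypothetical.

```bash
# Hypothetical helpers: derive the pkgs.k8s.io URLs from a single minor
# version channel so the signing key and repository cannot drift apart.
k8s_repo_base() {
  echo "https://pkgs.k8s.io/core:/stable:/${1:-v1.35}/deb/"
}

k8s_repo_line() {
  echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] $(k8s_repo_base "$1") /"
}
```

For example, `curl -fsSL "$(k8s_repo_base v1.35)Release.key"` fetches the same key as above, and `k8s_repo_line v1.35 | sudo tee /etc/apt/sources.list.d/kubernetes.list` writes the same repository entry.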

2.2 Initialization of the Control Plane

Initialize the Kubernetes control plane on the master node using the specified control-plane endpoint, node name, and pod network CIDR.

```bash
sudo kubeadm init \
  --control-plane-endpoint=<MASTER-IP-ADDRESS> \
  --node-name k8s-idial-master-orange \
  --pod-network-cidr=10.244.0.0/16
```

After initialization, configure kubectl access for the current user.

```bash
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
```

Install the Flannel CNI plugin to enable pod networking within the cluster.

```bash
kubectl apply -f https://raw.githubusercontent.com/flannel-io/flannel/master/Documentation/kube-flannel.yml
```

Verify that all system pods are operational.

```bash
kubectl get pods --all-namespaces
```

2.3 Joining the Worker Nodes

Generate the worker node join command on the master node.

```bash
kubeadm token create --print-join-command
```

Run the printed command with sudo on each worker node to join it to the cluster. It has the following form:

```bash
sudo kubeadm join <MASTER-IP-ADDRESS>:6443 --token <TOKEN> \
  --discovery-token-ca-cert-hash sha256:<HASH>
```

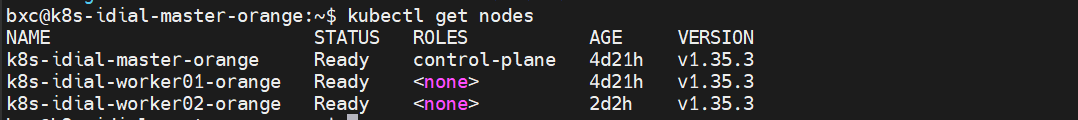

After all worker nodes have joined, verify the cluster node status from the master node.

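```bash
kubectl get nodes
```

Expected result: all three nodes (the control-plane node k8s-idial-master-orange and both worker nodes) are listed with STATUS Ready.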
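For scripted checks, the node list can be filtered for anything that is not Ready. A minimal sketch (hypothetical helper `not_ready_nodes`), assuming the default column layout of `kubectl get nodes --no-headers` (NAME, STATUS, ROLES, AGE, VERSION):

```bash
# Hypothetical helper: read "kubectl get nodes --no-headers" output on stdin
# and print every node whose STATUS column is not exactly "Ready".
# Cordoned nodes ("Ready,SchedulingDisabled") are reported as well.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}
```

`kubectl get nodes --no-headers | not_ready_nodes` prints nothing once all nodes are Ready. Alternatively, `kubectl wait --for=condition=Ready node --all --timeout=300s` blocks until every node reports Ready.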

2.4 Preparation of Worker Nodes for Longhorn

This step is only needed if you plan to use Longhorn with volume replication for failover; skip it for a single-worker setup. Run the following commands on each worker node.

Install the packages required by Longhorn and enable the iSCSI daemon.

```bash
sudo apt-get install -y open-iscsi nfs-common
sudo systemctl enable --now iscsid
```
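Before deploying Longhorn, you can check on each worker that the tools installed by these packages are present. The sketch assumes that `iscsiadm` (from open-iscsi) and `mount.nfs` (from nfs-common) are on root's PATH; the helper name `require_cmds` is hypothetical.

```bash
# Hypothetical helper: report every command from the argument list that is
# not found on the PATH; returns non-zero if at least one is missing.
require_cmds() {
  local cmd rc=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd" >&2
      rc=1
    fi
  done
  return "$rc"
}
```

As root, `require_cmds iscsiadm mount.nfs && systemctl is-active iscsid` should print `active` and no `missing:` lines.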

To prevent conflicts between multipathd and Longhorn block devices, apply the following configuration and restart the service.

```bash
cat << 'EOF' | sudo tee /etc/multipath.conf
defaults {
    user_friendly_names yes
}
blacklist {
    devnode "^sd[a-z0-9]+"
}
EOF

sudo systemctl restart multipathd
```

3. Infrastructure Components

3.1 Longhorn – Distributed Storage

Only deploy Longhorn if failover with replicated volumes is required. Otherwise skip to 3.2 MetalLB.

Deploy Longhorn into the cluster.

```bash
kubectl apply -f \
  https://raw.githubusercontent.com/longhorn/longhorn/v1.7.2/deploy/longhorn.yaml

# Wait until the longhorn-manager pods are ready (typically ~2–3 min)
kubectl wait --namespace longhorn-system \
  --for=condition=ready pod \
  --selector=app=longhorn-manager \
  --timeout=300s

# Check that the remaining Longhorn components are Running as well
kubectl get pods -n longhorn-system
```

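Optionally, adjust the default Longhorn storage settings to better accommodate environments with smaller disks. The node-down pod deletion policy (last command) enables the automatic failover described in section 5 and should be set whenever Longhorn is used for failover. According to the Longhorn settings reference, storage-reserved-percentage-for-default-disk only applies to default disks created after the change (for example on newly added nodes); the reserved space of existing disks can be edited per node in the Longhorn UI.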

```bash
# Reduce minimum free space from 25% to 10%
kubectl patch settings.longhorn.io storage-minimal-available-percentage \
  -n longhorn-system --type=merge -p '{"value":"10"}'

# Reduce reserved storage per node from 30% to 10%
kubectl patch settings.longhorn.io storage-reserved-percentage-for-default-disk \
  -n longhorn-system --type=merge -p '{"value":"10"}'

# Automatically delete pods on node failure to enable failover
kubectl patch settings.longhorn.io node-down-pod-deletion-policy \
  -n longhorn-system --type=merge \
  -p '{"value":"delete-both-statefulset-and-deployment-pod"}'
```
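To confirm that the patches were accepted, the current values can be read back. Like the patches above, this sketch assumes the Setting resources keep their value in the top-level `value` field; the helper name `show_longhorn_settings` is hypothetical.

```bash
# Hypothetical helper: print "name = value" for each Longhorn setting given
# as an argument, reading the top-level "value" field of the Setting resource.
show_longhorn_settings() {
  local name
  for name in "$@"; do
    printf '%s = %s\n' "$name" \
      "$(kubectl get settings.longhorn.io "$name" -n longhorn-system -o jsonpath='{.value}')"
  done
}
```

Usage: `show_longhorn_settings storage-minimal-available-percentage storage-reserved-percentage-for-default-disk node-down-pod-deletion-policy`.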

Optionally, the Longhorn web interface can be reached locally for monitoring and administration. While the following command runs, the UI is available at http://localhost:8080.

```bash
kubectl port-forward -n longhorn-system svc/longhorn-frontend 8080:80
```

3.2 MetalLB

Deploy MetalLB to provide load-balancer functionality in the bare-metal Kubernetes environment.

```bash
kubectl apply -f \
  https://raw.githubusercontent.com/metallb/metallb/v0.14.9/config/manifests/metallb-native.yaml

kubectl wait --namespace metallb-system \
  --for=condition=ready pod \
  --selector=app=metallb \
  --timeout=120s
```

Before applying the predefined IP address pool configuration, adjust the IP range defined in metallb/metallb-config.yaml to unused addresses in the node network. Then apply it:

```bash
kubectl apply -f metallb/metallb-config.yaml
```
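The content of metallb/metallb-config.yaml is not reproduced in this guide. As an illustration only, a Layer 2 configuration for MetalLB v0.14 typically consists of an IPAddressPool and an L2Advertisement (the two resource types queried in section 6). The sketch below writes such a file; the helper `write_metallb_config`, the pool name `idial-pool`, the advertisement name `idial-l2`, and the example range are all assumptions, and the shipped file may differ.

```bash
# Hypothetical helper: write a MetalLB Layer 2 configuration with a single
# address pool. $1 = output file, $2 = address range ("first-last" or CIDR).
write_metallb_config() {
  local target="$1" range="$2"
  cat > "$target" <<EOF
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: idial-pool
  namespace: metallb-system
spec:
  addresses:
    - ${range}
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: idial-l2
  namespace: metallb-system
spec:
  ipAddressPools:
    - idial-pool
EOF
}
```

For example, `write_metallb_config /tmp/metallb-example.yaml 10.10.10.230-10.10.10.240` produces a file that can be compared with the shipped one before deciding which to apply.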

3.3 NGINX-Ingress Controller

Deploy the nginx ingress controller.

```bash
kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.11.3/deploy/static/provider/cloud/deploy.yaml

kubectl wait --namespace ingress-nginx \
  --for=condition=ready pod \
  --selector=app.kubernetes.io/component=controller \
  --timeout=120s

# Verify external IP – must show an address from the MetalLB pool
kubectl get svc -n ingress-nginx ingress-nginx-controller
```
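To check the "address from the MetalLB pool" condition in a script, the assigned IP can be read with a JSONPath query and compared against the pool range. A sketch with hypothetical helpers `ip_to_int` and `ip_in_range`, assuming the pool is a single first-last range of IPv4 addresses:

```bash
# Hypothetical helpers: convert a dotted IPv4 address to an integer and test
# whether it lies inside an inclusive first-last range.
ip_to_int() {
  local old_ifs="$IFS"
  IFS=.
  set -- $1          # split "a.b.c.d" into $1..$4
  IFS="$old_ifs"
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

ip_in_range() {
  local ip first last
  ip="$(ip_to_int "$1")"; first="$(ip_to_int "$2")"; last="$(ip_to_int "$3")"
  [ "$ip" -ge "$first" ] && [ "$ip" -le "$last" ]
}
```

Usage, with an example range: `ip_in_range "$(kubectl get svc -n ingress-nginx ingress-nginx-controller -o jsonpath='{.status.loadBalancer.ingress[0].ip}')" 10.10.10.230 10.10.10.240 && echo OK`. While the address is still pending, the query returns an empty string and the check fails.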

For improved availability, scale the controller to two replicas and enforce node-level distribution using pod anti-affinity.

```bash
kubectl scale deployment ingress-nginx-controller -n ingress-nginx --replicas=2

kubectl patch deployment ingress-nginx-controller -n ingress-nginx --type=merge -p '{
  "spec": {
    "template": {
      "spec": {
        "affinity": {
          "podAntiAffinity": {
            "requiredDuringSchedulingIgnoredDuringExecution": [{
              "labelSelector": {
                "matchLabels": {"app.kubernetes.io/component": "controller"}
              },
              "topologyKey": "kubernetes.io/hostname"
            }]
          }
        }
      }
    }
  }
}'
```
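After the patch has rolled out, the two controller replicas should run on different nodes. A sketch that checks this, using a hypothetical helper that reads one node name per line:

```bash
# Hypothetical helper: read one node name per line on stdin and succeed only
# if no node name occurs more than once.
nodes_distinct() {
  [ -z "$(sort | uniq -d)" ]
}
```

Run `kubectl rollout status deployment/ingress-nginx-controller -n ingress-nginx` first so that no replica is still Pending (a Pending pod has no node and is shown as `<none>`), then: `kubectl get pods -n ingress-nginx -l app.kubernetes.io/component=controller -o custom-columns=NODE:.spec.nodeName --no-headers | nodes_distinct && echo "replicas on separate nodes"`.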

4. Deployment of IDIAL

4.1 Adjustment of Configuration Files

Set the IDIAL image version (mandatory). To review the available image tags, use the following command:

```bash
curl -s -u <DOCKER_USERNAME>:<TOKEN> \
  "https://hub.docker.com/v2/repositories/bxc2security/idial/tags/?page_size=25" \
  | python3 -c "import sys,json; [print(t['name']) for t in json.load(sys.stdin)['results']]"
```

Specify a stable image version in 05-idial.yaml:

```yaml
image: docker.io/bxc2security/idial:<STABLE_VERSION>
```
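The tag can also be set non-interactively. A minimal sketch with a hypothetical helper `set_idial_tag`; it assumes 05-idial.yaml contains exactly one `image: docker.io/bxc2security/idial:` line as shown above.

```bash
# Hypothetical helper: replace the tag on the IDIAL image line in place.
# $1 = manifest file, $2 = tag; indentation in front of "image:" is kept.
set_idial_tag() {
  local file="$1" tag="$2"
  sed -i "s|image: docker.io/bxc2security/idial:.*|image: docker.io/bxc2security/idial:${tag}|" "$file"
  grep -q "image: docker.io/bxc2security/idial:${tag}\$" "$file"
}
```

Usage: `set_idial_tag 05-idial.yaml <STABLE_VERSION>`, with a tag taken from the listing above.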

Update the application secrets in 02-app-secrets.yaml:

- SECRET_KEY must be set to a secure random value.
- PKCS8_PW must match the password used during certificate generation.
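One way to produce a random SECRET_KEY is to read from /dev/urandom. The sketch below (hypothetical helper `gen_secret_key`) prints 32 random bytes as 64 hexadecimal characters; `openssl rand -hex 32` is an equivalent one-liner. This guide does not state whether 02-app-secrets.yaml expects plain values (under `stringData`) or base64-encoded ones (under `data`), so check the file before pasting the value.

```bash
# Hypothetical helper: print 32 random bytes from /dev/urandom as a
# 64-character lowercase hex string, suitable as a SECRET_KEY value.
gen_secret_key() {
  od -An -N32 -tx1 /dev/urandom | tr -d ' \n'
}
```

Usage: `gen_secret_key; echo`.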

4.2 Creation of Namespace and Registry Credentials

Create the target namespace and then create the Docker registry secret required for image pulls.

```bash
kubectl apply -f 00-namespace.yaml

kubectl create secret docker-registry registry-credentials \
  --docker-server=https://index.docker.io/v1/ \
  --docker-username=<DOCKER_USERNAME> \
  --docker-password=<DOCKER_PASSWORD_OR_ACCESS_TOKEN> \
  --docker-email=<EMAIL> \
  -n idial
```
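To double-check the secret before deploying (see the ImagePullBackOff row in section 6), the stored Docker configuration can be decoded and searched for the registry server. A sketch with a hypothetical helper that reads the base64 payload on stdin:

```bash
# Hypothetical helper: decode a base64-encoded .dockerconfigjson payload from
# stdin and succeed if it contains the given registry server string.
dockerconfig_has_registry() {
  base64 -d | grep -qF "$1"
}
```

Usage: `kubectl get secret registry-credentials -n idial -o jsonpath='{.data.\.dockerconfigjson}' | dockerconfig_has_registry https://index.docker.io/v1/ && echo OK`.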

4.3 Creation of the TLS Secret for Ingress

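Generate a self-signed certificate (CN *.company.local) whose subject alternative names cover the IDIAL hostnames, create the Kubernetes TLS secret from it, and remove the local certificate files afterward. Browsers validate the subject alternative names rather than the CN, so every hostname served through the ingress must appear in the -addext list.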

You can also use your own certificate. In that case, skip the openssl command and create the secret directly from your existing .crt and .key files.

```bash
openssl req -x509 -nodes -days 365 -newkey rsa:2048 \
  -keyout idial-ingress.key \
  -out idial-ingress.crt \
  -subj "/CN=*.company.local/O=Company" \
  -addext "subjectAltName=DNS:idial.company.local,DNS:idial-api.company.local,DNS:ua.company.local,DNS:db.company.local"

kubectl create secret tls idial-ingress-tls \
  --cert=idial-ingress.crt \
  --key=idial-ingress.key \
  -n idial

rm idial-ingress.key idial-ingress.crt
```
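Because the local files are deleted, the subject alternative names can only be checked from the secret itself. The sketch assumes OpenSSL 1.1.1 or newer (already required for `-addext` above); the helper name `cert_sans` is hypothetical.

```bash
# Hypothetical helper: read a PEM certificate on stdin and print its subject
# alternative names on one line (e.g. "DNS:idial.company.local, DNS:...").
cert_sans() {
  openssl x509 -noout -ext subjectAltName | tail -n +2 | sed 's/^ *//'
}
```

Usage: `kubectl get secret idial-ingress-tls -n idial -o jsonpath='{.data.tls\.crt}' | base64 -d | cert_sans`.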

4.4 Deployment of All Application Components

Deploy the complete application stack using the kustomization in the current directory.

```bash
kubectl apply -k .
```

Monitor the deployment progress until all pods are running and all persistent volume claims are bound.

```bash
kubectl get pods -n idial -w
kubectl get pvc -n idial   # All PVCs must be Bound
kubectl get ingress -n idial
```
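Instead of watching manually, `kubectl wait` can block until the claims are bound and the pods are ready. A sketch with a hypothetical helper, assuming a kubectl version that supports `--for=jsonpath` (v1.23 and later); the timeouts are arbitrary choices.

```bash
# Hypothetical helper: block until every PVC in the idial namespace is Bound
# and every pod reports Ready. Timeouts are arbitrary; raise them on slow storage.
wait_for_idial() {
  kubectl wait --for=jsonpath='{.status.phase}'=Bound pvc --all -n idial --timeout=300s &&
    kubectl wait --for=condition=Ready pod --all -n idial --timeout=600s
}
```

If the stack contains Job pods, which never become Ready, wait on the deployments instead: `kubectl wait --for=condition=Available deployment --all -n idial --timeout=600s`.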

4.5 DNS Configuration

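After successful deployment, create DNS A records that point the IDIAL hostnames to the MetalLB VIP. The hostnames must match the ingress host rules and the subject alternative names of the TLS certificate from step 4.3. Example (domain bxc.local):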

| Hostname | IP |
|---|---|
| idial.bxc.local | 10.10.10.230 |
| idial-api.bxc.local | 10.10.10.230 |
| ua.bxc.local | 10.10.10.230 |

Alternative – local testing via hosts file:

```
<METALLB-VIP> idial.company.local idial-api.company.local ua.company.local
```

- Linux / macOS: /etc/hosts
- Windows: C:\Windows\System32\drivers\etc\hosts
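On Linux or macOS, the hosts entry can be added idempotently. A sketch with a hypothetical helper `add_hosts_entry`; the VIP remains a placeholder.

```bash
# Hypothetical helper: append a hosts-file line for the given IP and
# hostnames unless an identical line is already present.
# $1 = hosts file, $2 = IP, remaining arguments = hostnames.
add_hosts_entry() {
  local file="$1" ip="$2" line
  shift 2
  line="$ip $*"
  grep -qxF "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}
```

In a root shell: `add_hosts_entry /etc/hosts <METALLB-VIP> idial.company.local idial-api.company.local ua.company.local`.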

4.6 Accessing the Services

| Service | URL |
|---|---|
| IDIAL Web UI | https://idial.bxc.local |
| IDIAL REST API | https://idial-api.bxc.local |
| OPC UA Expert (VNC) | https://ua.bxc.local |

To confirm the MetalLB virtual IP assignment, run:

```bash
kubectl get svc -n ingress-nginx ingress-nginx-controller
```

5. Failover Behavior

The environment operates with two worker nodes and Longhorn volume replication using two copies per volume. If one worker node fails, workloads are expected to restart automatically on the remaining worker node.

Expected failover timeline:

| Phase | Duration |
|---|---|
| Node becomes NotReady | ~40 s |
| Pods are evicted | ~5 min (Kubernetes default) |
| Longhorn volumes reattach | ~60 s |
| Pods start on Worker 2 | ~30 s |
| Total | ~7 minutes |
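The total is the sum of the approximate phase durations, assuming the phases run one after another:

$$
40\,\text{s} + 300\,\text{s} + 60\,\text{s} + 30\,\text{s} = 430\,\text{s} \approx 7.2\,\text{min}
$$

The eviction phase dominates: it corresponds to the default 300 s toleration that Kubernetes adds to pods for the node.kubernetes.io/not-ready and node.kubernetes.io/unreachable taints.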

Failover test:

```bash
# Watch from the master while shutting down one node:
kubectl get pods -n idial -o wide -w
```
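To compare a real failover with the timeline above, the web UI can also be polled from a client in a second terminal. A minimal sketch with a hypothetical helper `watch_http_status`; `-k` skips certificate verification because the certificate from step 4.3 is self-signed, and curl reports `000` while the address is unreachable.

```bash
# Hypothetical helper: poll a URL every $2 seconds (default 5) and print a
# timestamped line whenever the HTTP status code changes.
# $3 limits the number of polls (default 0 = run until interrupted).
watch_http_status() {
  local url="$1" interval="${2:-5}" count="${3:-0}" last="" code n=0
  while [ "$count" -eq 0 ] || [ "$n" -lt "$count" ]; do
    code="$(curl -k -s -o /dev/null -w '%{http_code}' --max-time 5 "$url")"
    if [ "$code" != "$last" ]; then
      echo "$(date '+%H:%M:%S') $code"
      last="$code"
    fi
    n=$((n + 1))
    sleep "$interval"
  done
}
```

Usage: `watch_http_status https://idial.bxc.local`, stopped with Ctrl+C once the service answers with 200 again.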

Manual recovery procedure if pods remain stuck:

```bash
# Force-delete stuck Terminating pods (note: --all deletes every pod in the namespace)
kubectl delete pods -n idial --all --force --grace-period=0

# Release Longhorn volumes stuck on the failed node
# Set FAILED_NODE to the name of the node that went down
export FAILED_NODE=k8s-idial-worker01-orange
kubectl get volumes.longhorn.io -n longhorn-system -o json | \
python3 -c "
import json, os, subprocess, sys

failed_node = os.environ['FAILED_NODE']
data = json.load(sys.stdin)
for v in data['items']:
    if v.get('status', {}).get('currentNodeID') == failed_node:
        name = v['metadata']['name']
        subprocess.run([
            'kubectl', '-n', 'longhorn-system', 'patch', 'volume.longhorn.io', name,
            '--type=merge', '--patch', '{\"spec\":{\"nodeID\":\"\",\"migrationNodeID\":\"\"}}',
        ])
"
```
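Before patching, it can help to list which volumes the release step would touch. A sketch with a hypothetical helper `volumes_on_node` that filters `custom-columns` output:

```bash
# Hypothetical helper: read "NAME NODE" lines on stdin (kubectl custom-columns
# output) and print the names of volumes attached to the node given in $1.
volumes_on_node() {
  awk -v node="$1" '$2 == node { print $1 }'
}
```

Usage: `kubectl get volumes.longhorn.io -n longhorn-system -o custom-columns=NAME:.metadata.name,NODE:.status.currentNodeID --no-headers | volumes_on_node k8s-idial-worker01-orange`.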

6. Troubleshooting

Diagnostic Commands

```bash
# Pod details (e.g. for Pending or CrashLoopBackOff)
kubectl describe pod -n idial <POD_NAME>

# Application logs
kubectl logs -n idial deployment/idial
kubectl logs -n idial deployment/idial-web-backend

# Longhorn volume status
kubectl get volumes.longhorn.io -n longhorn-system

# Ingress controller logs
kubectl logs -n ingress-nginx deployment/ingress-nginx-controller

# Events in the namespace
kubectl get events -n idial --sort-by='.lastTimestamp'

# MetalLB status
kubectl get ipaddresspool -n metallb-system
kubectl get l2advertisement -n metallb-system
```
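When reporting a problem, it can be convenient to save the output of these commands in one directory. A sketch with a hypothetical helper `collect_idial_diagnostics`; the file names are arbitrary, and each command's errors are captured next to its output so that one failing command does not stop the collection.

```bash
# Hypothetical helper: run the diagnostic commands from this section and
# store each output (stdout and stderr) in its own file under $1.
collect_idial_diagnostics() {
  local out="$1"
  mkdir -p "$out" || return 1
  kubectl get pods -n idial -o wide > "$out/pods.txt" 2>&1
  kubectl get pvc -n idial > "$out/pvc.txt" 2>&1
  kubectl get events -n idial --sort-by='.lastTimestamp' > "$out/events.txt" 2>&1
  kubectl logs -n idial deployment/idial > "$out/idial.log" 2>&1
  kubectl logs -n idial deployment/idial-web-backend > "$out/idial-web-backend.log" 2>&1
  kubectl logs -n ingress-nginx deployment/ingress-nginx-controller > "$out/ingress.log" 2>&1
  kubectl get volumes.longhorn.io -n longhorn-system > "$out/longhorn-volumes.txt" 2>&1
  kubectl get ipaddresspool,l2advertisement -n metallb-system > "$out/metallb.txt" 2>&1
  return 0
}
```

Usage: `collect_idial_diagnostics "idial-diag-$(date +%Y%m%d-%H%M)"`, then archive the directory, for example with `tar czf`.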

Common Symptoms

| Symptom | Likely Cause | Solution |
|---|---|---|
| Pod Pending | Longhorn volume not ready | `kubectl get pods -n longhorn-system` |
| ImagePullBackOff | Registry credentials missing or wrong | Redo step 4.2 |
| PVC Pending | Longhorn not ready or DiskPressure | `kubectl get nodes.longhorn.io -n longhorn-system` |
| Longhorn volume faulted | DiskPressure – not enough free space | Set storage-minimal-available-percentage to 10 |
| CrashLoopBackOff (idial) | Wrong image tag (latest is broken) | Set a stable tag in 05-idial.yaml |
| Ingress EXTERNAL-IP: `<pending>` | MetalLB not installed or no IP pool | Check step 3.2 |
| 503 Service Unavailable | Backend pod not ready | `kubectl get pods -n idial` |
| 502 Bad Gateway after failover | Pods not yet started on Worker 2 | Wait (~7 min) or force-delete stuck pods |
| Longhorn manager 1/2 | multipathd conflict on worker | Apply /etc/multipath.conf fix (step 2.4) |
| Volume stuck Attaching | Old pod holding volume (Multi-Attach) | `kubectl delete pod <POD> -n idial --force --grace-period=0` |

Non-Migrated Services

The following services from the Docker Compose deployment are not included in the Kubernetes setup:

| Service | Reason |
|---|---|
| dockhand | Requires the Docker socket, which is not available with containerd |
| status_check | Docker-specific pre-flight check, not needed in Kubernetes |
| finish-idial | Interactive Docker Compose hint, not relevant in Kubernetes |